An NTN system draws timing inputs from multiple sources. UEs, including IoT devices, synchronise to GPS time via their GNSS receivers. Orbital data and ephemeris predictions are commonly delivered in UTC. Computing infrastructure may timestamp internally in TAI. Operators schedule beams and analyse logs in UTC or local time. GPS time, TAI, and UTC are offset from one another by tens of seconds, not milliseconds, and every offset involving UTC changes whenever a leap second is introduced. When an engineer feeds an orbital input stamped in UTC into a calculation that assumes GPS time, the result is wrong by 18 seconds. The system will not flag this. There is no format error, no failed parse, no exception. The numbers simply describe a satellite that is not where the system thinks it is.

This is a structural integration problem. In NTN deployments where timing precision directly governs positioning accuracy, scheduling, and link acquisition, an undetected offset of this magnitude causes real failures that present as something else entirely.

The time systems and their offsets

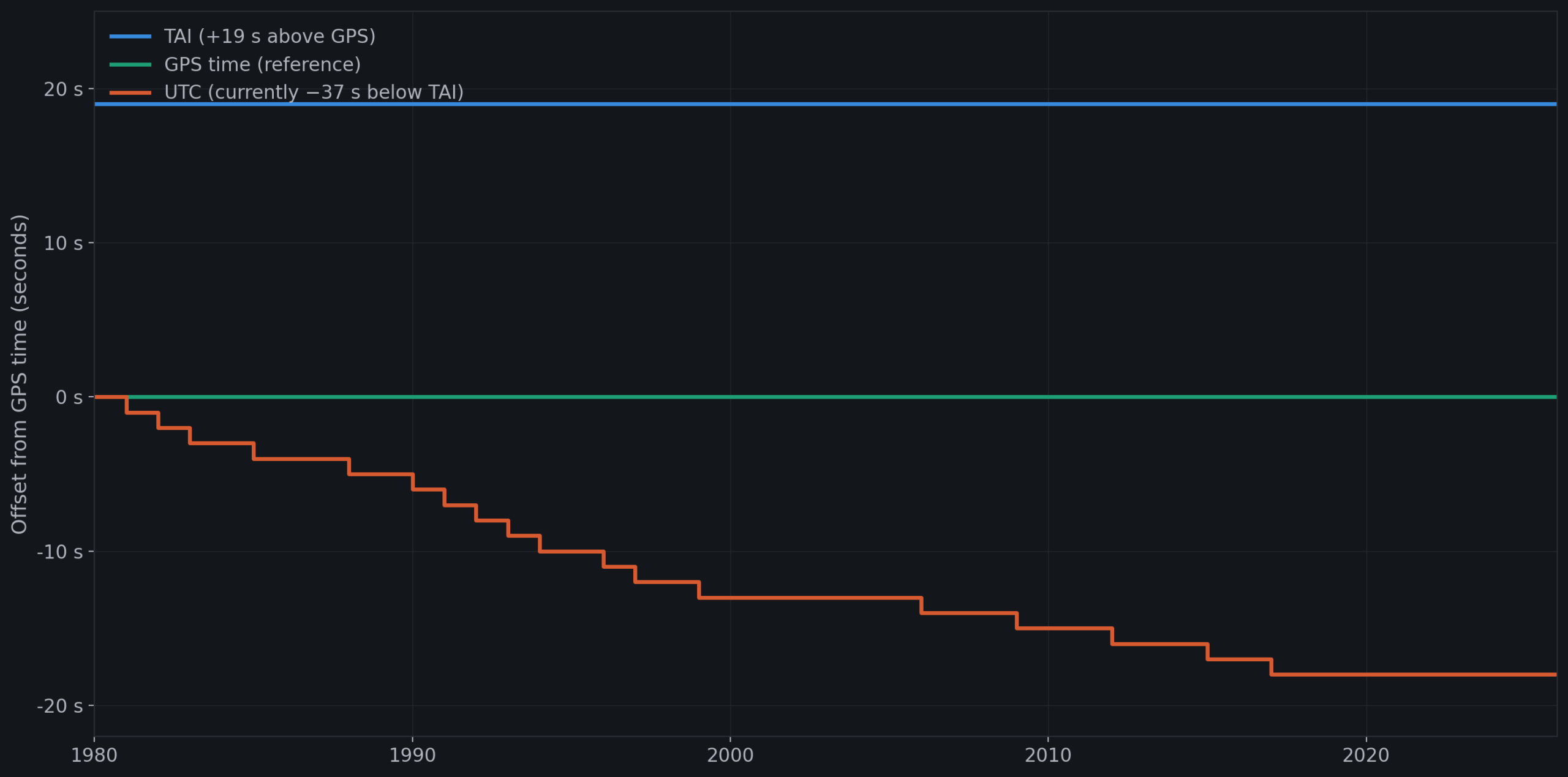

GPS time is a continuous atomic timescale with an epoch of midnight UTC on 6 January 1980. It does not incorporate leap seconds. The offset between TAI and GPS time is exactly 19 seconds, fixed by definition at the GPS epoch.

TAI, International Atomic Time, is the underlying atomic reference derived from approximately 400 atomic clocks worldwide. It is also continuous and does not include leap seconds.

UTC is derived from TAI by subtracting an integer number of leap seconds. These are introduced by the International Earth Rotation and Reference Systems Service (IERS) to keep UTC aligned with the Earth's rotation. Since 1 January 2017, TAI minus UTC has been 37 seconds, and no further leap second had been introduced as of early 2026.

From these definitions: GPS time currently leads UTC by 18 seconds, TAI leads UTC by 37 seconds, and GPS time trails TAI by exactly 19 seconds. Wall clock or local time adds timezone offsets on top of UTC, introducing further displacement depending on the operator’s location.
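The same relationships can be written as equations. Here ΔAT denotes TAI minus UTC, a conventional symbol adopted for brevity. Only ΔAT changes when a leap second is introduced, so the GPS-UTC offset always moves with it, while the TAI-GPS offset never does.

```latex
\begin{aligned}
t_{\mathrm{TAI}} - t_{\mathrm{GPS}} &= 19\ \mathrm{s} \quad \text{(fixed by definition)} \\
t_{\mathrm{TAI}} - t_{\mathrm{UTC}} &= \Delta AT = 37\ \mathrm{s} \quad \text{(since 1 January 2017)} \\
t_{\mathrm{GPS}} - t_{\mathrm{UTC}} &= \Delta AT - 19\ \mathrm{s} = 18\ \mathrm{s}
\end{aligned}
```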

These are precise, deterministic offsets that propagate through every calculation they touch.

Why NTN makes this worse

In terrestrial cellular networks, timing mismatches of this kind are less likely to cause operational failures. Base stations and devices are close together, propagation delays are small, and synchronisation infrastructure is tightly controlled within a single operator’s domain.

NTN changes every part of this. Round-trip propagation delays via LEO satellites reach tens of milliseconds. For GEO constellations, the one-way path from device to ground station via the satellite takes roughly 240 to 270 milliseconds, and the radio link round trip is about twice that. Timing advance values in NTN are correspondingly far larger than in terrestrial networks. Doppler shifts from LEO orbital velocities can exceed tens of kilohertz. Beam footprints move continuously, and scheduling must account for satellite position at future points in time.
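The delay and Doppler magnitudes above can be sanity-checked with a few lines of arithmetic. The following is a minimal sketch under simplifying assumptions: circular orbits, a user in the orbital plane, no Earth rotation, and standard values for the physical constants. The function names are hypothetical. The GEO figure uses nadir geometry at both ends, so it is a lower bound on the one-way delay.

```python
import math

C_KM_S = 299_792.458        # speed of light in vacuum, km/s
MU_EARTH = 398_600.4418     # Earth's gravitational parameter, km^3/s^2
R_EARTH = 6_378.137         # Earth's equatorial radius, km
GEO_ALTITUDE_KM = 35_786.0  # nominal geostationary altitude, km


def geo_one_way_delay_ms(altitude_km: float = GEO_ALTITUDE_KM) -> float:
    """Device -> satellite -> ground station delay with both ends at nadir.

    This is the shortest possible geometry, so it is a lower bound.
    """
    return 2 * altitude_km / C_KM_S * 1_000


def leo_max_doppler_hz(altitude_km: float, carrier_hz: float) -> float:
    """Peak Doppler shift at 0 degrees elevation for a circular orbit.

    Simplified 2-D model: the user lies in the orbital plane and Earth
    rotation is ignored, so the range rate peaks at v * R / r on the horizon.
    """
    r = R_EARTH + altitude_km
    v = math.sqrt(MU_EARTH / r)        # circular orbital speed, km/s
    peak_range_rate = v * R_EARTH / r  # km/s
    return carrier_hz * peak_range_rate / C_KM_S


print(f"GEO one-way (nadir): {geo_one_way_delay_ms():.1f} ms")
print(f"LEO 600 km at 2 GHz: {leo_max_doppler_hz(600, 2e9) / 1e3:.1f} kHz")
```

With these assumptions the GEO nadir path comes out at roughly 239 ms. The 600 km LEO case at 2 GHz peaks at roughly 46 kHz, which is consistent with the figures in the text.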

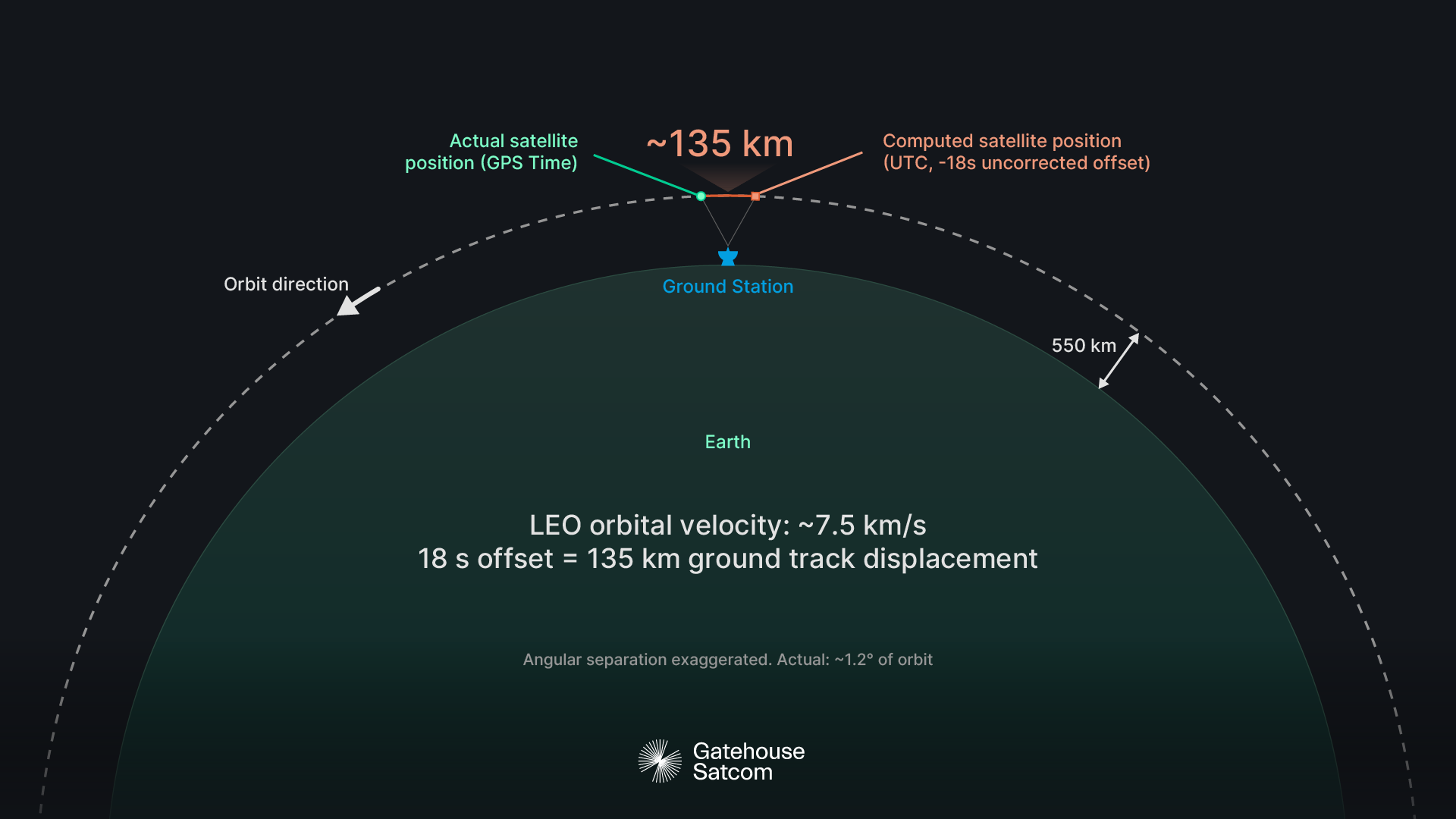

All of these depend on knowing where the satellite is and when. Orbital mechanics calculations consume ephemeris data that describes satellite position as a function of time. If the time system of the ephemeris does not match the time system of the consumer, every derived quantity is evaluated at an epoch shifted by the full offset: satellite position, velocity, Doppler prediction, beam pointing, and timing advance pre-compensation. At LEO orbital speeds of around 7.5 kilometres per second, an 18-second error corresponds to roughly 135 kilometres of along-track displacement. The satellite is simply not where the system computed it to be.
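The displacement figure follows directly from circular-orbit speed multiplied by the timing offset. This is a minimal sketch assuming a circular orbit at a chosen altitude. The helper names are hypothetical, and the ground projection ignores Earth rotation.

```python
import math

MU_EARTH = 398_600.4418  # Earth's gravitational parameter, km^3/s^2
R_EARTH = 6_378.137      # Earth's equatorial radius, km


def along_track_error_km(time_offset_s: float, altitude_km: float) -> float:
    """Distance a satellite in a circular orbit covers during a timing offset."""
    speed_km_s = math.sqrt(MU_EARTH / (R_EARTH + altitude_km))
    return speed_km_s * abs(time_offset_s)


def sub_satellite_error_km(time_offset_s: float, altitude_km: float) -> float:
    """Same error projected onto the Earth's surface (Earth rotation ignored)."""
    r = R_EARTH + altitude_km
    return along_track_error_km(time_offset_s, altitude_km) * R_EARTH / r


# The three offsets that arise between GPS time, TAI and UTC.
for offset_s in (18, 19, 37):
    print(offset_s, round(along_track_error_km(offset_s, 600)),
          round(sub_satellite_error_km(offset_s, 600)))
```

At 600 km altitude, an 18-second slip moves the satellite roughly 136 km along its orbit, which is about 124 km at the sub-satellite point. A 37-second TAI/UTC confusion roughly doubles that.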

This failure mode is silent. The ephemeris data is structurally valid. The computation executes without error. The outputs are within plausible numerical ranges. The predicted satellite position is wrong, and every downstream function that depends on it degrades or fails: beam scheduling, Doppler pre-compensation, timing advance calculation.

Where the mismatches occur in practice

The clearest example is the interface between UEs and orbital data. A device acquires time from its GNSS receiver, which operates in GPS time. GPS satellites broadcast the GPS-UTC offset in the navigation message, allowing receivers to compute UTC if required, but the native timescale is GPS time.
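Converting a receiver's GPS week and time of week into UTC is where the leap-second offset has to be applied explicitly. This is a minimal sketch that assumes several things:

- The week count has already been resolved past any 10- or 13-bit rollover.
- The offset is supplied as the value the receiver decoded from the navigation message.
- The function name is hypothetical.

```python
from datetime import datetime, timedelta, timezone

GPS_EPOCH = datetime(1980, 1, 6, tzinfo=timezone.utc)  # 00:00 UTC, 6 January 1980
SECONDS_PER_WEEK = 7 * 24 * 3600


def gps_week_tow_to_utc(week: int, tow_s: float, gps_minus_utc_s: int) -> datetime:
    """Convert a GPS week number and time of week to a UTC datetime.

    week must be the full week count since the GPS epoch (rollover already
    resolved). gps_minus_utc_s is the current leap-second offset as decoded
    by the receiver, not a constant baked into the code.
    """
    gps_elapsed = timedelta(seconds=week * SECONDS_PER_WEEK + tow_s)
    # Python datetimes have no leap seconds, so GPS_EPOCH + elapsed is a
    # GPS-time calendar label; subtracting the offset turns it into UTC.
    return GPS_EPOCH + gps_elapsed - timedelta(seconds=gps_minus_utc_s)


print(gps_week_tow_to_utc(2000, 0, gps_minus_utc_s=18))
```

GPS week 2000 began at midnight GPS time on 6 May 2018, which is 23:59:42 UTC on 5 May 2018. Skipping the subtraction is exactly the 18-second error described here.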

Orbital data, however, is commonly delivered and stored in UTC. Ephemeris predictions, satellite position tables, and beam scheduling plans are typically timestamped in UTC because that is the convention in the space operations domain and the standard reference for most ground infrastructure.

When a system consumes UTC-stamped ephemeris data and correlates it against a GPS-time device clock without applying the 18-second correction, the satellite position used for Doppler pre-compensation and timing advance is evaluated at the wrong epoch. The device attempts to acquire a signal based on parameters derived from a satellite position that is 18 seconds stale or 18 seconds in the future. In a LEO scenario, this can mean the satellite has moved well outside the angular range where the computed parameters are valid.
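The effect on Doppler pre-compensation can be estimated by evaluating the satellite's geometry at the true epoch and at the slipped epoch. This is a minimal sketch using a simplified 2-D model: a circular orbit, a user in the orbital plane, and no Earth rotation. It is an illustration of the error mechanism, not a pre-compensation algorithm, and all names are hypothetical.

```python
import math

C_KM_S = 299_792.458     # speed of light, km/s
MU_EARTH = 398_600.4418  # Earth's gravitational parameter, km^3/s^2
R_EARTH = 6_378.137      # Earth's equatorial radius, km


def doppler_hz(central_angle_rad: float, altitude_km: float, carrier_hz: float) -> float:
    """Doppler seen by a user in the orbital plane (2-D, no Earth rotation).

    central_angle_rad is the Earth-centred angle from the user to the
    satellite: positive while the satellite approaches, negative once it
    has passed overhead.
    """
    r = R_EARTH + altitude_km
    v = math.sqrt(MU_EARTH / r)
    slant_km = math.sqrt(R_EARTH**2 + r**2 - 2 * R_EARTH * r * math.cos(central_angle_rad))
    closing_speed = R_EARTH * v * math.sin(central_angle_rad) / slant_km  # km/s
    return carrier_hz * closing_speed / C_KM_S


def doppler_error_from_epoch_slip(central_angle_rad, slip_s, altitude_km, carrier_hz):
    """Pre-compensation error when the orbit is evaluated slip_s seconds late."""
    r = R_EARTH + altitude_km
    angular_rate = math.sqrt(MU_EARTH / r) / r  # rad/s
    assumed_angle = central_angle_rad - angular_rate * slip_s
    return (doppler_hz(assumed_angle, altitude_km, carrier_hz)
            - doppler_hz(central_angle_rad, altitude_km, carrier_hz))


err = doppler_error_from_epoch_slip(0.0, 18, 600, 2e9)
print(f"Doppler error near zenith: {err / 1e3:+.1f} kHz")
```

Under these assumptions, an 18-second slip near zenith at 600 km and 2 GHz leaves a residual of roughly 10 kHz. That is a large fraction of a 15 kHz subcarrier spacing, which is consistent with the acquisition failures described next.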

The symptom an engineer sees is a failed random-access attempt, a synchronisation signal that appears weak or absent, or a Doppler pre-compensation that does not converge. The failure presents as a radio problem or a link budget issue, and nothing in the diagnostic output points to a time system mismatch.

A second common mismatch occurs in validation and test environments. Some timing libraries and infrastructure components can be configured to use TAI, or may default to an atomic timescale without leap second adjustment. If logs from those components are compared against device behaviour in GPS time, there is a 19-second offset; if they are compared against scheduled events in UTC, the offset is 37 seconds. Correlating across these logs can produce apparent timing anomalies, false latency measurements, or misattributed failure sequences. An 18-, 19-, or 37-second shift in log alignment can make a correctly timed event appear to have occurred outside its valid window, or mask a genuine timing fault by shifting it into an apparently benign region.
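One practical safeguard is to check whether the apparent offset between two correlated logs sits suspiciously close to a known time-scale offset. This is a minimal sketch that assumes events have already been paired across the two logs. The function name is hypothetical, and the offset table is valid only while TAI minus UTC is 37 seconds.

```python
from statistics import median

# Whole-second offsets that point to a time-scale mix-up rather than a real
# latency. Valid while TAI - UTC = 37 s (since 1 January 2017).
KNOWN_SCALE_OFFSETS_S = {
    18.0: "GPS time vs UTC",
    19.0: "TAI vs GPS time",
    37.0: "TAI vs UTC",
}


def suspect_scale_mismatch(pairs, tolerance_s=0.5):
    """Flag a probable time-scale mismatch between two logs.

    pairs holds (time_in_log_a, time_in_log_b) in seconds for events known
    to be the same occurrence. The median keeps the check robust to a few
    mispaired events or genuine latency outliers.
    """
    offset = median(b - a for a, b in pairs)
    for known, label in KNOWN_SCALE_OFFSETS_S.items():
        if abs(abs(offset) - known) <= tolerance_s:
            return f"probable {label} mismatch: logs differ by {offset:+.3f} s"
    return None


print(suspect_scale_mismatch([(10.0, 47.002), (20.0, 57.001), (30.0, 66.998)]))
```

The check does not correct anything. Its purpose is to turn a silent 37-second skew into an explicit diagnostic before someone reads it as a latency problem.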

A third area involves leap second handling. The GPS-UTC offset is not permanently 18 seconds. It changes each time a leap second is introduced. Leap seconds are announced by the IERS at least six months in advance, so the change itself is predictable. The risk is that software carrying a hardcoded offset value is not updated when the change takes effect. GPS satellites broadcast the current offset, and well-implemented receivers apply it automatically. But software that hardcodes the offset, or databases that store a GPS-UTC conversion factor without updating it, will silently drift by one second each time a leap second is added. Systems validated before a leap second event may fail after one without any configuration change, and the failure will not reference leap seconds in any log or diagnostic output.
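Replacing a hardcoded 18 with a dated lookup keeps the offset correct across leap-second events. This is a minimal sketch. Only the last few entries of the TAI minus UTC table are shown. A real system would load the full table from a maintained source, such as the IERS leap-second file, and refresh it. The function names are hypothetical.

```python
from bisect import bisect_right
from datetime import datetime

# (UTC instant from which the value applies, TAI - UTC in seconds).
# Abbreviated: a real table starts in 1972 and is loaded, not typed in.
LEAP_SECOND_TABLE = [
    (datetime(2012, 7, 1), 35),
    (datetime(2015, 7, 1), 36),
    (datetime(2017, 1, 1), 37),
]
_EFFECTIVE_DATES = [start for start, _ in LEAP_SECOND_TABLE]

GPS_MINUS_TAI_S = -19  # fixed by definition at the GPS epoch


def tai_minus_utc_s(utc: datetime) -> int:
    """Look up TAI - UTC for a naive UTC instant."""
    i = bisect_right(_EFFECTIVE_DATES, utc)
    if i == 0:
        raise ValueError(f"{utc} predates the loaded leap-second table")
    return LEAP_SECOND_TABLE[i - 1][1]


def gps_minus_utc_s(utc: datetime) -> int:
    """GPS - UTC, derived from the table rather than hardcoded as 18."""
    return tai_minus_utc_s(utc) + GPS_MINUS_TAI_S


print(gps_minus_utc_s(datetime(2016, 12, 31, 12)))  # 17
print(gps_minus_utc_s(datetime(2017, 1, 1)))        # 18
```

The published leap-second files also carry an expiry date. A production version would likely refuse to answer for instants beyond it, rather than silently extrapolate the last known value.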

Mitigation in practice

The ideal state is for all components in an NTN system to operate in a single time system. GPS time is the natural candidate, given that devices inherently synchronise to it. In practice, this is not always achievable. Orbital data suppliers, ground segment infrastructure, core network elements, and operational tools each have their own conventions, and changing them is not always within the integrator’s control.

What is within the integrator’s control is explicit time system labelling and conversion at every interface. Every timing input should carry an unambiguous declaration of its time system. Every handoff between components operating in different time systems should apply the correct offset, sourced from a maintained reference rather than a hardcoded constant. Validation should include deliberate offset injection, testing the system’s behaviour when a timing input arrives in an unexpected time system, to confirm that the mismatch is detected rather than silently absorbed.
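Explicit labelling, conversion at the interface, and offset injection can be combined in a small typed wrapper. This is a minimal sketch, not a reference design. The `Timestamp` type, the `require_gps` guard, and the GPS-only consumer it stands in for are all hypothetical. The TAI minus UTC value is passed in so that it can come from a maintained table.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum


class TimeScale(Enum):
    GPS = "GPS"
    UTC = "UTC"
    TAI = "TAI"


@dataclass(frozen=True)
class Timestamp:
    label: datetime  # calendar reading in `scale` (naive, no tzinfo)
    scale: TimeScale


def convert(ts: Timestamp, target: TimeScale, tai_minus_utc_s: int) -> Timestamp:
    """Re-express a timestamp in another scale.

    tai_minus_utc_s should come from a maintained leap-second source.
    """
    behind_tai_s = {TimeScale.TAI: 0, TimeScale.GPS: 19, TimeScale.UTC: tai_minus_utc_s}
    shift = behind_tai_s[ts.scale] - behind_tai_s[target]
    return Timestamp(ts.label + timedelta(seconds=shift), target)


def require_gps(ts: Timestamp) -> datetime:
    """Interface guard for a hypothetical consumer that only accepts GPS time."""
    if ts.scale is not TimeScale.GPS:
        raise ValueError(f"expected GPS time, got {ts.scale.value}")
    return ts.label


# Offset injection: deliver a UTC-stamped epoch where GPS time is expected.
utc_epoch = Timestamp(datetime(2025, 1, 1, 12, 0, 0), TimeScale.UTC)
try:
    require_gps(utc_epoch)
except ValueError as err:
    print("mismatch detected:", err)
print(require_gps(convert(utc_epoch, TimeScale.GPS, tai_minus_utc_s=37)))
```

The design makes the scale part of the value rather than a convention in someone's head. The wrong input is rejected at the boundary instead of being absorbed, and the only way through is an explicit conversion.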

The Gatehouse Satcom perspective

At Gatehouse Satcom, time system alignment is a practical integration concern encountered across NTN programmes. Working with orbital data, UE timing, and satellite system integration means that the boundary between GPS time, UTC, and TAI is crossed routinely. We have seen how an ephemeris input delivered in one time system and consumed in another produces failures that surface as radio or scheduling anomalies rather than timing errors. Identifying and resolving these mismatches is a standard part of our integration and validation work, and it is one of the areas where experience across multiple NTN system configurations provides direct engineering value.

Our test environments are designed to make time system assumptions explicit and to surface mismatches before they reach deployment. The cost of finding them in the field is measured in weeks of debugging spent looking in the wrong place.

The offset between GPS time, UTC, and TAI is deterministic, well-documented, and straightforward to correct. Nothing in the system signals when the correction has not been applied. In NTN, where every timing input feeds directly into positioning, scheduling, and synchronisation, an undetected 18-second offset produces a deployment that fails for reasons that appear unrelated to timing.

On the 3GPP air interface, reference time is expressed on a GPS-based timescale. The network broadcasts the current leap second offset to devices via system information (SIB9 in NR), enabling UTC derivation. The GPS-UTC boundary is therefore crossed at a defined point in the architecture. Treating time system alignment as an explicit integration requirement, rather than an assumed detail, starts with knowing where that boundary sits.

Claus Siggaard Andersen

VP of 5G Engineering, Gatehouse Satcom

Claus Siggaard Andersen is VP of 5G Engineering at Gatehouse Satcom, leading the engineering team behind next-generation satellite communication technology. With over 25 years of experience spanning telecom, IT modernization, and aerospace, he combines deep technical expertise with proven leadership in delivering complex, large-scale programs across international organizations.